Artificial Intelligence: How can artificial intelligence be used in political communication?

ChatGPT, Jasper, Uizard and Midjourney - AI applications abound. We investigate whether and how they can be useful in political communication.

Artificial intelligence is currently omnipresent in feature articles, business magazines and talk shows. Artificial intelligence will write homework from now on, replace all jobs and determine our lives - at least if you believe the narrative. Artificial intelligence is also making its way into the world of political communication. According to one survey of 297 communication professionals from companies, organisations and PR agencies, 59% already use artificial intelligence.

But what can artificial intelligence really do? Which tools are currently on the market and how can they make work more efficient? We asked our experts in graphics, social media editing and text/concept, and provide an overview of the possibilities and limitations of AI-based applications for socio-political campaigns and content.

Editorial Assistant ChatGPT

Convincing socio-political campaigns usually start with a problem or grievance, or pursue a concrete goal. The next task is to identify target groups and a hook. Once all this is in place, communication concepts and creative ideas are developed. Whether claim, wording and tonality, landing pages, brochures or giveaways - a good campaign is the interplay of many interlocking parts. Let us be clear from the start: no AI in the world can do this for us.

However, AI tools can help with individual steps and take on small, sometimes tedious subtasks. If you enter all the necessary information, ChatGPT can draft emails, support SEO work or create schedules for an event. Of course, care should always be taken not to enter any confidential or personal data, because ChatGPT uses this data to learn from it.

According to the company itself, the tool ChatFlash by Neuroflash offers a GDPR-compliant alternative. As a German company, Neuroflash is subject to European data protection standards and specialises in German-language use. Like ChatGPT, ChatFlash is based on the GPT-3.5 language model, which generates text by stringing words together based on probabilities. The resulting texts already come very close to human-written material, but they tend to have a rather monotonous style and can be riddled with errors.
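To make the idea of stringing words together based on probabilities more tangible, here is a deliberately tiny sketch in Python. It is not how GPT-3.5 works internally (real language models use neural networks trained on vast amounts of text); it only counts which word follows which in a made-up sample sentence and then picks the next word at random according to those counts. The sample text and all function names are our own illustrative assumptions.

```python
import random
from collections import Counter, defaultdict

# Made-up sample text, used only for illustration.
SAMPLE = (
    "the campaign needs a claim the campaign needs a hook "
    "the campaign reaches voters"
)


def build_bigram_counts(text):
    """Count, for each word, which words follow it in the text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the follower counts of one word into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {w: n / total for w, n in followers.items()}


def generate(counts, start, length, seed=0):
    """Build a word sequence by repeatedly sampling a likely next word."""
    rng = random.Random(seed)  # fixed seed keeps the toy output repeatable
    words = [start]
    for _ in range(length - 1):
        followers = counts.get(words[-1])
        if not followers:
            break  # the last word was never followed by anything
        candidates = list(followers.keys())
        weights = list(followers.values())
        words.append(rng.choices(candidates, weights=weights, k=1)[0])
    return " ".join(words)


COUNTS = build_bigram_counts(SAMPLE)

if __name__ == "__main__":
    print(next_word_probabilities(COUNTS, "campaign"))
    print(generate(COUNTS, "the", 6))
```

Even this toy version shows why such texts can sound fluent yet repetitive: the model only ever reproduces word sequences that were likely in its source material.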

AI Tools to Help With Design

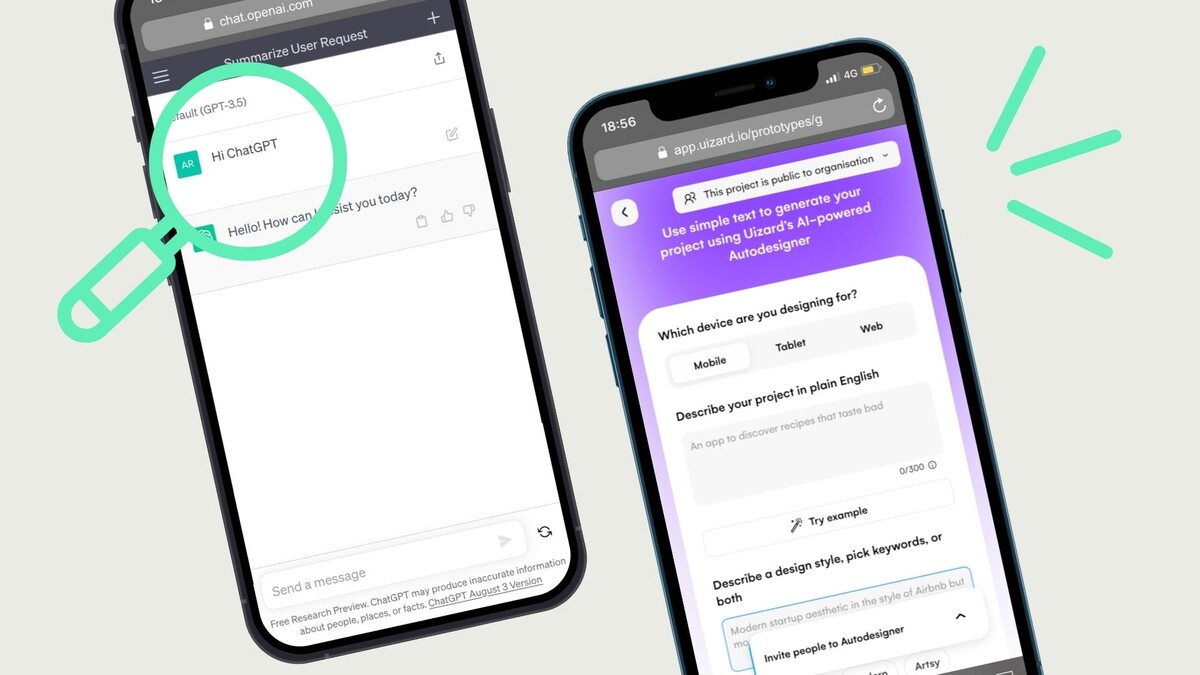

In design, tools such as Midjourney, Framer or Uizard can also help to create creative products such as visuals, logos, websites or apps.

An initial brainstorming session with the help of AI can open up new inspirations and approaches and kick-start creativity. In addition, time-consuming tasks such as image editing, layout creation or typography selection can be automated by AI tools, so that designers can fully concentrate on the creative aspects of their work.

The power of artificial intelligence can also be applied to the design of user interfaces and the related user experience (UX): By analysing user data, the behaviour of users can be predicted. For example, tools like The Grid automatically create web pages that are tailored to users' preferences and needs.

The tool Framer then helps to test designs. It can simulate realistic interactions and click paths based on existing user data, identifying problem areas of a new design before it goes live.

However, no AI can deliver completely new designs, because AI tools learn from a data set of existing images. This can cause considerable problems. The dataset used (LAION-5B in the case of Midjourney, for example) often consists of billions of images that were collected from the internet via "web crawling" and stored together with text attributes. The data is not curated, either when the dataset is created or before it is used to train AI tools. As a result, the images include billions of copyrighted artworks, photographs and confidential medical records for which no licence has been obtained. AI-generated imagery should therefore be treated with caution.

Brandbastion, Jasper and Ocoya

And in social media marketing? The greatest strength of AI tools is analysing large amounts of data. In social media marketing, algorithms can examine large quantities of posts on platforms such as Twitter, Facebook or Instagram in a short time. Tools like Brandbastion, Jasper or Ocoya, for example, identify opinions, uncover trends and analyse the behaviour of users. In this way, relevant topics and trends can be identified before campaign planning begins and used specifically for the success of the campaign.

However, it should always be noted that social media posts are often unstructured and inaccurate, which can lead to erroneous results. Fake news and shitstorms in particular are a constant problem, making analysis difficult for AI tools. While intelligent filtering can deliver high accuracy, it can also miss critical news. Therefore, critical examination of the results is particularly important when working with AI.

AI tools can also support the daily tasks in social media marketing by providing inspiration in content creation, enabling upload planning for different channels and creating automated reports from which potential for improvement can be derived.

Fake News on the rise?

However, despite the numerous advantages that AI applications offer, they also come up against limits that should be considered when using them in socio-political communication.

An important issue here is the ethics of using AI tools. In political communication, there are repeated examples of AI tools being misused to manipulate opinions or spread misinformation. The AfD, for example, used AI-generated images for its agitation against refugees. And the founder of the research network Bellingcat, Eliot Higgins, used the AI application Midjourney to create images of a fictitious arrest of Trump.

These examples show that the use of AI is still a double-edged sword. Misuse and misinformation are major risks of the technology. Therefore, not only the quality and reliability of the data analysed by AI, but also the end result should always be critically examined.

In addition, much remains unclear about data protection and data security, as almost no model discloses the data sets fed into it or the way in which the AI is trained. Accordingly, it is unclear whether there is even a legal basis for the use of AI products. Moreover, many of the AI applications currently available, such as ChatGPT, use the data entered by users to learn from it. Following heavy criticism, ChatGPT now lets users opt out of this, but the opt-out does not apply retroactively, and many other tools offer no such option.

Finally, resource consumption should also be considered when using AI applications. Data centres require large amounts of electricity to run the servers and water to cool them. Experts estimate that training a single AI model generates around 300,000 kg of CO2 equivalents. In addition, a conversation with ChatGPT, which averages 20-50 questions, consumes half a litre of water. Especially in areas where water is scarce, this can lead to difficulties.
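To put the water figure into perspective, it can be broken down per question. The short Python sketch below uses only the numbers quoted above (half a litre per conversation, 20-50 questions per conversation); the function and constant names are our own, and the result is a rough back-of-the-envelope estimate, not a measurement.

```python
# Figures as quoted in the text above.
WATER_PER_CONVERSATION_ML = 500  # half a litre, in millilitres
QUESTIONS_SHORT_CONVERSATION = 20
QUESTIONS_LONG_CONVERSATION = 50


def water_per_question_ml(questions_per_conversation):
    """Spread the water used by one conversation evenly over its questions."""
    return WATER_PER_CONVERSATION_ML / questions_per_conversation


if __name__ == "__main__":
    upper = water_per_question_ml(QUESTIONS_SHORT_CONVERSATION)
    lower = water_per_question_ml(QUESTIONS_LONG_CONVERSATION)
    print(f"Roughly {lower:.0f} to {upper:.0f} ml of water per question")
```

On these assumptions, a single question accounts for roughly 10 to 25 ml of water, which only becomes significant through the sheer number of requests.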

Our conclusion?

At a time when socio-political communication is becoming increasingly complex, AI tools can provide valuable support. In addition to a variety of ways to analyse data, they can take over (sometimes tedious) routine tasks and provide inspiration in the creation of creative content. Used correctly, tools such as ChatGPT, Jasper AI or Uizard can increase productivity and enable more efficient work.

However, to achieve the best results, it is important to recognise the limitations and challenges of these tools. Despite the possibilities offered by AI tools, the role of a good agency remains essential for socio-political communication. Trained staff can interpret, filter and contextualise the results of AI tools. They can also set ethical guidelines and ensure that the tools are used responsibly and transparently. An agency can draw on its expertise and experience to help understand complex policy contexts and make strategic decisions.

We are happy to advise you on effectively integrating AI tools into your processes. Whether you want support with social media management or want to streamline your design and text processes, together we will find the right strategy.